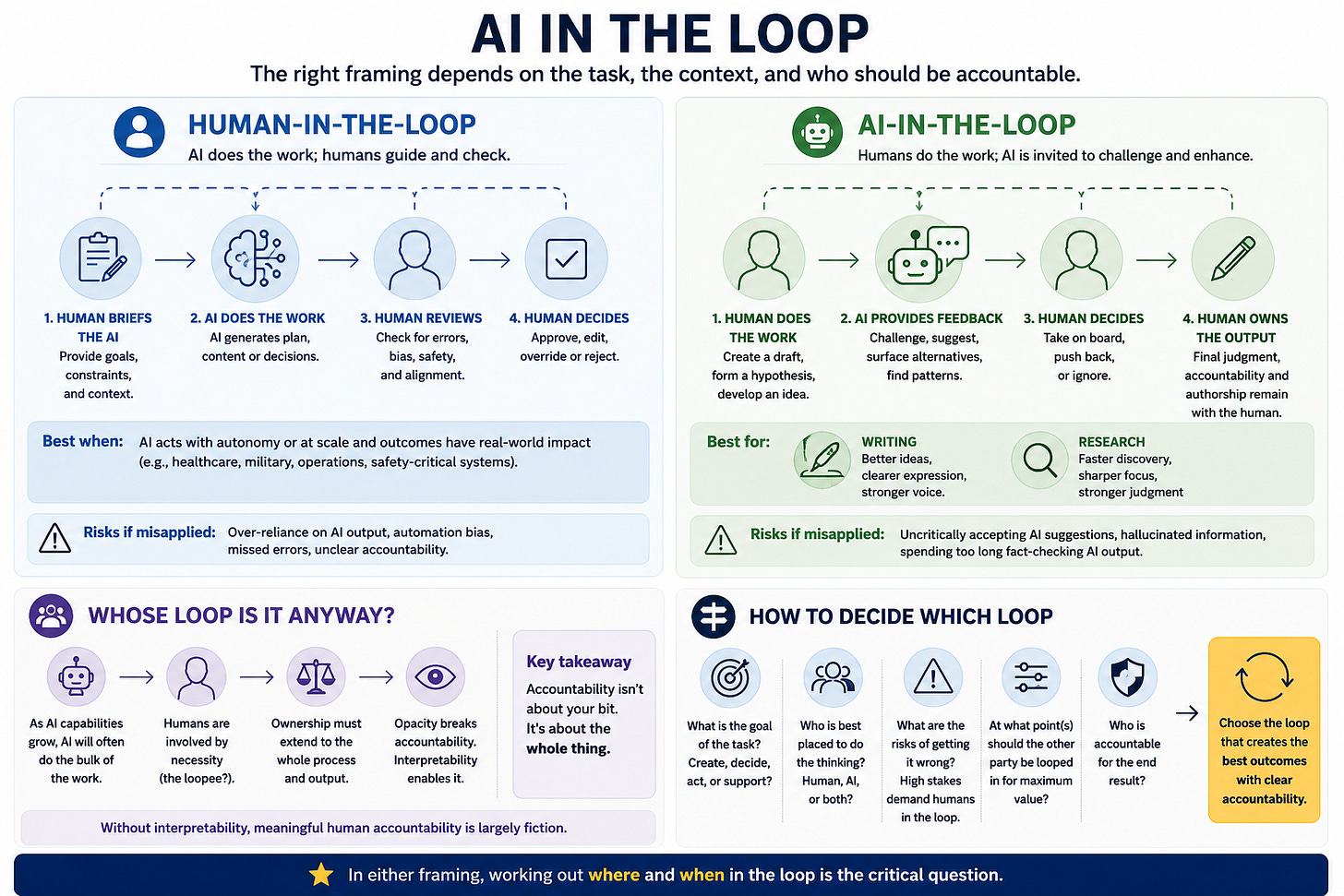

AI in the loop

Why human-in-the-loop isn't always the right framing

‘Human-in-the-loop’ is an established term for having a person looped into the development and operation of AI systems in order to guide or override the AI’s decision-making.

It’s a critical principle and one I always strongly advise my clients to follow. As agentic AI (AI that can take action, not just chat) becomes more prevalent, humans not being in the loop at the right moment can have serious consequences (see last week’s cautionary tale).

In certain contexts (healthcare, the military), it can literally be a matter of life and death. An explicit commitment to it in a military context is one of the red lines Anthropic recently drew with Trump’s Department of War, on the basis that “frontier AI systems are simply not reliable enough to power fully autonomous weapons”.

However, human-in-the-loop risks framing the relationship between humans and AI in a singular and potentially reductive way, where AI always does the bulk of the thinking and the human’s role is to brief the AI and then check its output for mistakes.

In practice, I think there are scenarios, particularly in creative domains, where it’s more helpful to frame AI as the party being kept in the loop. For example:

Writing

I’ve written before about how I use AI for writing “as an always-available, affordable and tireless editor, proofreader and sounding board”. I write a draft, which an AI assistant (usually Claude) gives me feedback on. I then decide what feedback to take on board and what to push back on / ignore. I remain in the driving seat and fully accountable for what I’ve written. The AI is in the loop, invited to challenge and suggest improvements, and I believe my writing is all the better for that (you should see it before AI feeds back…)

I’d love it (and think the internet would be a better place) if more people kept AI in the loop when they were writing, rather than looping themselves into an AI-led writing process at the beginning and - if we’re lucky - the end. This is the thinking behind my vibe-coded ‘Should I post this on LinkedIn?’ tool.

I’d also love it if we went back to judging writing based on whether it was thought-provoking and/or emotionally resonant rather than obsessing over whether or not it has the hallmarks of AI assistance. My biggest problem with the AI-written posts now flooding LinkedIn is that they are soulless and boring, not that they have em-dashes in them.

Research

AI tools can be invaluable for research, but an ‘AI-in-the-loop’ mindset is helpful here too.

Looping AI into a research task often dramatically compresses what would be days or weeks of searching into a single prompt - useful for working out where to dig. But it doesn’t negate the need to assess primary sources first-hand or to shape findings into recommendations you can actually stand behind.

The risk of a human-in-the-loop approach to research is that you end up either inadvertently sharing hallucinated information or spending your life fact-checking AI-generated output.

Whose loop is it anyway?

As agentic AI becomes more capable, there will be more scenarios where AI inevitably does the bulk of the work and humans are necessarily the ones being looped in (the loopee?). At that point, the question of who owns the loop - and who is accountable for its outputs - becomes paramount.

Humans can’t just be responsible for ‘their bit’ of an AI-heavy process. Ownership has to extend to the whole thing. That’s a harder ask when the AI model’s reasoning is opaque, and it highlights something AI companies will need to devote more attention to: interpretability. Understanding how models actually arrive at their outputs has mostly been an academic concern so far. As AI takes on more consequential work, it will become a commercial one too - because without it, meaningful human accountability is largely fiction.

In the meantime, I’d suggest it’s worth considering whether any given task is best approached as ‘human-in-the-loop’ or ‘AI-in-the-loop’. In either framing, working out where and when in the loop is the critical question.

Postscript: I asked ChatGPT to create a diagrammatic image to accompany this post. Here’s what it came up with: