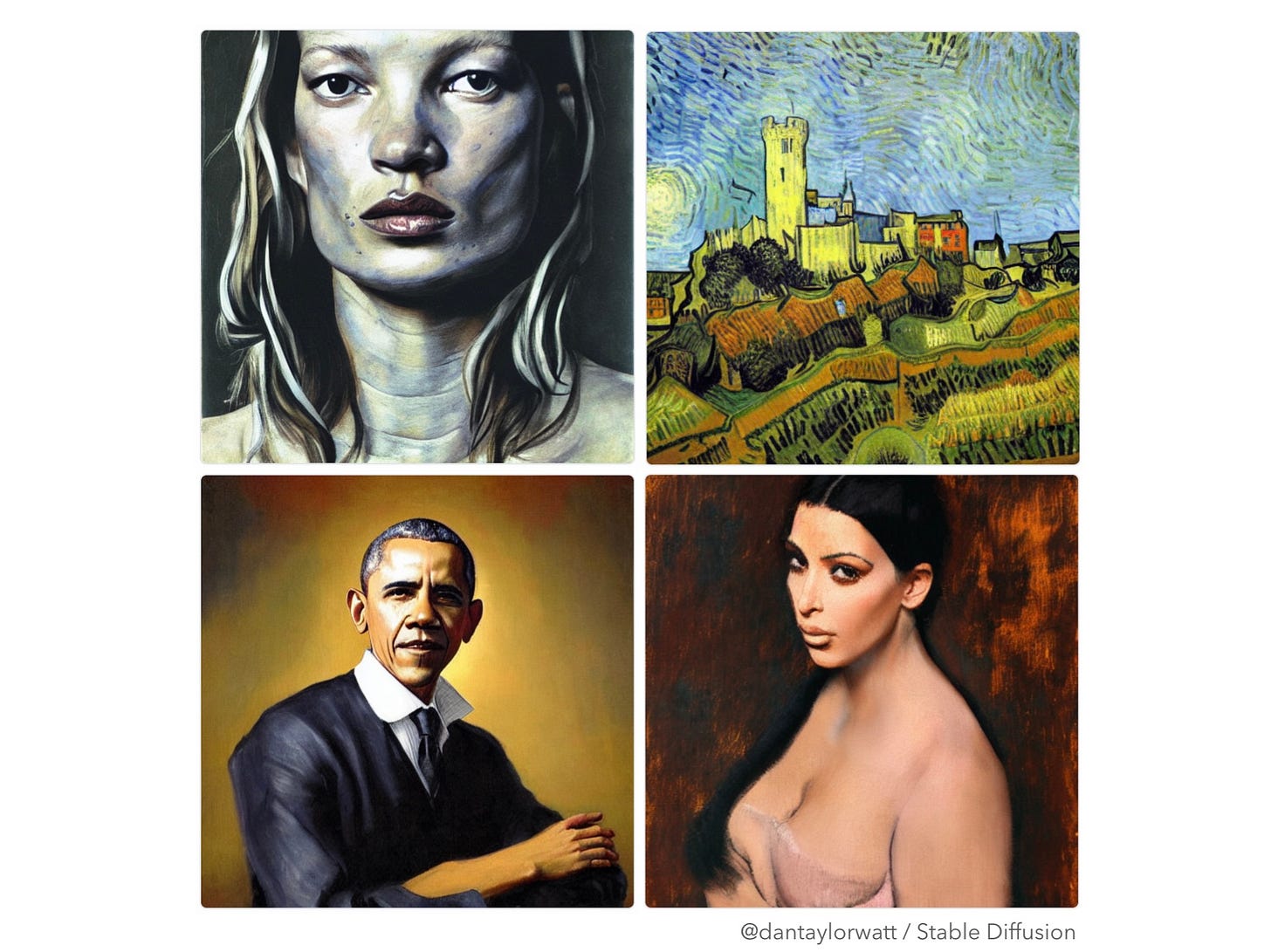

Q: What do the four works of art pictured above all have in common?

A: They were all created yesterday by AI responding to short prompts* written by me.

More specifically, they were generated using the open-source text-to-image (aka image synthesis) model Stable Diffusion, which was released to the public a few weeks ago.

It’s not the first image synthesis model (see OpenAI’s DALL-E 2, Google’s Parti and Imagen, Meta’s Make-A-Scene, Midjourney). However, it’s the first to be made freely available on an open-source basis, opening it up to many more users.

You currently need a decent graphics processing unit (GPU) and a fair degree of tech smarts to get it up and running locally on your computer. However, there’s an online demo version that just requires you to wait a couple of minutes for your turn on a remote GPU.

And it will become more accessible. It won’t be long before you can create images from text (or voice) prompts instantly on your phone.

Whilst the company releasing Stable Diffusion, Stability AI, has attempted to put safeguards in place (e.g. watermarking, NSFW filtering), the fact that it’s open source means modified versions which remove those safeguards have already been created.

The blog post announcing the public release, penned by Founder Emad Mostaque, optimistically states “We hope everyone will use this in an ethical, moral and legal manner”. Unfortunately, the history of all technological developments to date suggests it won’t play out that way.

Unsurprisingly, AI-generated digital art is already causing significant controversy in the art world. But what does more accessible image synthesis mean for the wider world of media?

Firstly, it means many more convincing AI-generated images bouncing around the Internet. You no longer need any design skills to create an artificial and potentially misleading image - you just need to pick the right prompt words.

Whilst some very smart people are working on how to quickly and reliably verify the authenticity of digital images, social media doesn’t have a habit of waiting and checking, so we should expect to see many more fake images in our social feeds and on less rigorous news sites.

This isn’t a new problem. But more accessible image synthesis is definitely going to make it worse.

And it’s not just static images of course. The same technologies can be applied to generate video. The verisimilitude isn’t there for video yet, but it will come.

It’s easy to jump from here to a dystopian vision of inane, mind-rotting, hateful media being churned out by bias-trained AI. Whilst I have no doubt such content will exist (as it does today, largely generated by humans without the assistance of AI), I don’t believe AI-generated media will dominate. Instead, I anticipate it will become another (admittedly very powerful) tool in the toolbox of content creators, used for inspiration, for inclusion in wider pieces and, at times, to stand alone.

Much as photography didn’t render drawing or painting redundant and CGI didn’t kill live action video production, AI-generated media won’t obliterate media lovingly crafted by humans.

However, it will put pressure on some digital creators, and it will require us all to adopt a new level of scepticism when assessing the provenance and authenticity of digital media.

*The prompts which generated these four images: ‘Kate Moss painted by Lucien Freud’, ‘Lewes Castle painted by Van Gogh’, ‘Barack Obama painted by Rembrandt’, ‘Kim Kardashian painted by Degas’.